Pupillometry Experiment on Spanish Subjunctive Processing: EyeLink Implementation and Psycholinguistic Analysis [Ongoing Project]

Overview

This project consists of the design, implementation, and deployment of a pupillometry-based psycholinguistic experiment investigating how bilingual speakers process Spanish subjunctive morphology in real time. The experiment was built using Experiment Builder and run on an EyeLink 1000 Plus system, integrating linguistic theory, experimental design, and technical implementation.

The project is part of my work in the Arizona Applied Psycholinguistics Lab (AAPL) at the University of Arizona, which studies how pupil dilation reflects cognitive load during sentence processing, particularly when participants encounter ungrammatical structures. This study extends that paradigm by incorporating additional experimental controls, including indicative mood baselines, lexical frequency manipulation, and nonce verbs.

Project Goals

More specifically, this project pursues the following goals:

- Test the Bottleneck Hypothesis in real-time processing. A main objective is to evaluate predictions from the Bottleneck Hypothesis, which claims that functional morphology is a primary source of difficulty in second language acquisition. By focusing on the subjunctive, this project examines whether bilingual speakers show increased processing effort when encountering morphological violations, even when they may perform well on explicit tasks.

- Compare implicit and explicit knowledge of grammar:

  - Explicit knowledge (offline tasks, such as forced-choice judgments).

  - Implicit processing (pupil dilation).

- Examine differences across speaker populations. Another goal is to compare how different bilingual groups process subjunctive morphology:

  - Native speakers (baseline).

  - Heritage speakers.

  - L2 learners.

- Measure cognitive load using pupillometry. A methodological goal is to use pupil dilation as a continuous, real-time indicator of processing difficulty. The project tests whether ungrammatical sentences elicit greater pupil dilation than grammatical ones, which would make pupil dilation a more sensitive measure than traditional behavioral responses.

Technologies and Tools

System Architecture

The experiment was deployed using a dual-machine EyeLink setup and Experiment Builder:

- Host PC

  - Handles eye tracking.

  - Stores raw data (.edf).

- Display PC

  - Runs the experiment.

  - Presents stimuli (audio + fixation).

  - Records behavioral data (.dat).

Stimulus Dataset

The dataset created includes the following sections:

- Trial structure variables (condition, block, list).

- Linguistic predictors (mood, grammaticality, verb type).

- Timing variables (onsets, durations).

Data Pipeline

The experiment produces two primary outputs:

- Eye-tracking data (.edf): Pupil size, gaze, timestamps.

- Behavioral data (.dat): Reaction times, responses.

These datasets are later combined for statistical analysis.

Project Breakdown

1. Stimulus Dataset and Experimental Structure in Experiment Builder

The first stage of the project focuses on preparing the DataSource file used by Experiment Builder. This dataset is currently being developed to control the flow of the experiment and ensure that each trial is systematically linked to its corresponding linguistic condition.

The dataset is designed to include:

- Trial identifiers: item_id, condition, list.

- Linguistic variables:

  - Mood: subjunctive vs indicative.

  - Grammaticality: grammatical vs ungrammatical.

  - Verb type: volitional vs epistemic.

- Audio file paths.

- Timing-related variables.

This dataset functions as the core control system of the experiment. By structuring the experiment in this way, I am separating experimental logic from presentation logic, which facilitates ongoing debugging and refinement.
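As an illustration of how such a dataset could be generated rather than typed by hand, the sketch below builds one row per item and condition and writes them as tab-delimited text. It assumes the DataSource is populated from a tab-delimited file, that mood and grammaticality are fully crossed, and that verb type alternates by item; the column labels `verb_type` and `audio_file`, the file-naming pattern, and the Latin-square list rotation are all hypothetical choices for this example, not the project's actual layout.

```python
import csv
import io
from itertools import product

# Column names follow the variables listed above; "verb_type" and
# "audio_file" are hypothetical labels chosen for this sketch.
COLUMNS = ["item_id", "condition", "list", "mood",
           "grammaticality", "verb_type", "audio_file"]

MOODS = ["subjunctive", "indicative"]
GRAMMATICALITY = ["grammatical", "ungrammatical"]


def build_rows(n_items, audio_dir="audio"):
    """Return one row per item x condition, with a Latin-square list number."""
    conditions = list(product(MOODS, GRAMMATICALITY))
    rows = []
    for item_id in range(1, n_items + 1):
        # Assumption: verb type alternates by item; the real design may differ.
        verb_type = "volitional" if item_id % 2 else "epistemic"
        for cond_idx, (mood, gram) in enumerate(conditions):
            condition = f"{mood}_{gram}"
            rows.append({
                "item_id": item_id,
                "condition": condition,
                # Rotate conditions across lists so that each list contains
                # every item exactly once, in a different condition per list.
                "list": (item_id - 1 + cond_idx) % len(conditions) + 1,
                "mood": mood,
                "grammaticality": gram,
                "verb_type": verb_type,
                # Hypothetical file-naming pattern.
                "audio_file": f"{audio_dir}/item{item_id:02d}_{condition}.wav",
            })
    return rows


def write_datasource(rows, handle):
    """Write rows as tab-delimited text with a header line."""
    writer = csv.DictWriter(handle, fieldnames=COLUMNS, delimiter="\t",
                            lineterminator="\n")
    writer.writeheader()
    writer.writerows(rows)


# Usage: build a small dataset in memory and print it.
buffer = io.StringIO()
write_datasource(build_rows(n_items=4), buffer)
print(buffer.getvalue())
```

With this rotation, conditions are balanced within a list only when the number of items is a multiple of the number of conditions.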

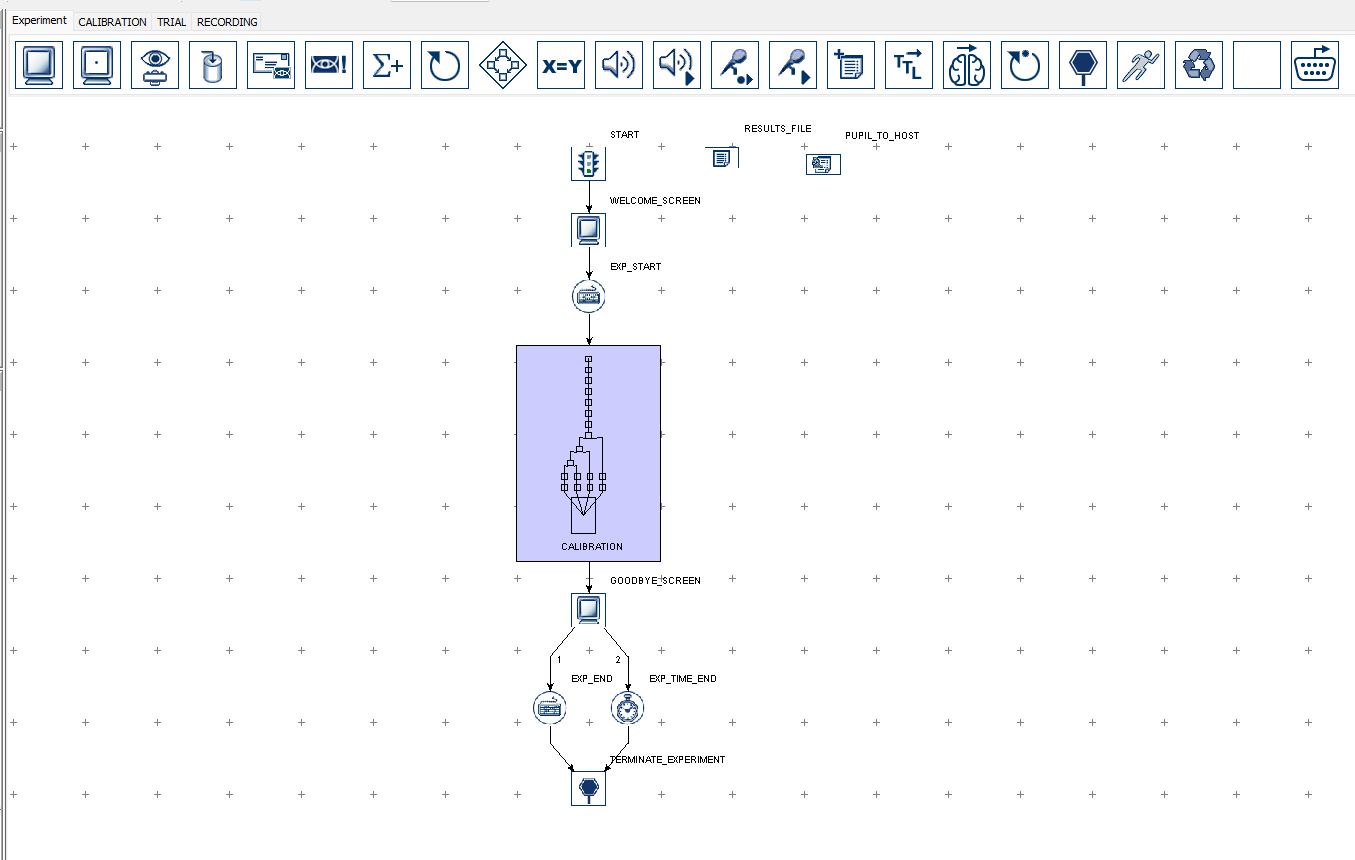

2. Experiment Builder Structure: Routines, Timeline, and Trial Flow

The experiment is being constructed inside Experiment Builder using a sequence of routines organized in the timeline. Each routine corresponds to a specific stage in the participant experience and is triggered dynamically based on the DataSource variables.

The current structure includes:

- Calibration and validation (EyeLink setup).

- Instruction screens.

- Trial loop (core experiment).

- Response collection.

- End screen.

Trial Structure

Each trial is designed to follow this sequence:

- Fixation cross.

- Audio playback (sentence stimulus).

- Pupil recording during playback.

- Acceptability judgment (button/key response).

Figure 1. Trial Structure.

This structure is implemented using a loop connected to the DataSource file, where each row corresponds to one trial. The system is currently being refined to ensure proper randomization and condition balancing.
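One possible approach to the randomization step, sketched here outside Experiment Builder, is to reshuffle the trial order until no condition repeats more than a fixed number of times in a row. The function names, the `max_run` limit of 2, and the `condition` key are assumptions for illustration; the same constraint may also be achievable through Experiment Builder's own DataSource randomization settings.

```python
import random


def longest_run(trials, key):
    """Return the length of the longest streak of identical trials[key] values."""
    longest = current = 0
    previous = object()  # sentinel that never equals a real value
    for trial in trials:
        current = current + 1 if trial[key] == previous else 1
        previous = trial[key]
        longest = max(longest, current)
    return longest


def constrained_shuffle(trials, key="condition", max_run=2, seed=None,
                        max_tries=1000):
    """Shuffle trials so that no value of trials[key] repeats more than
    max_run times consecutively. Uses simple rejection sampling."""
    rng = random.Random(seed)
    order = list(trials)
    for _ in range(max_tries):
        rng.shuffle(order)
        if longest_run(order, key) <= max_run:
            return order
    raise RuntimeError("No valid order found; relax max_run or add trials.")


# Usage: 16 toy trials, 4 per condition (labels are placeholders).
trials = [{"item_id": i, "condition": f"cond{i % 4}"} for i in range(16)]
order = constrained_shuffle(trials, seed=1)
print([t["condition"] for t in order])
```

Rejection sampling is easy to reason about and fast for short trial lists; a tighter constraint or a much longer list might call for a constructive algorithm instead.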

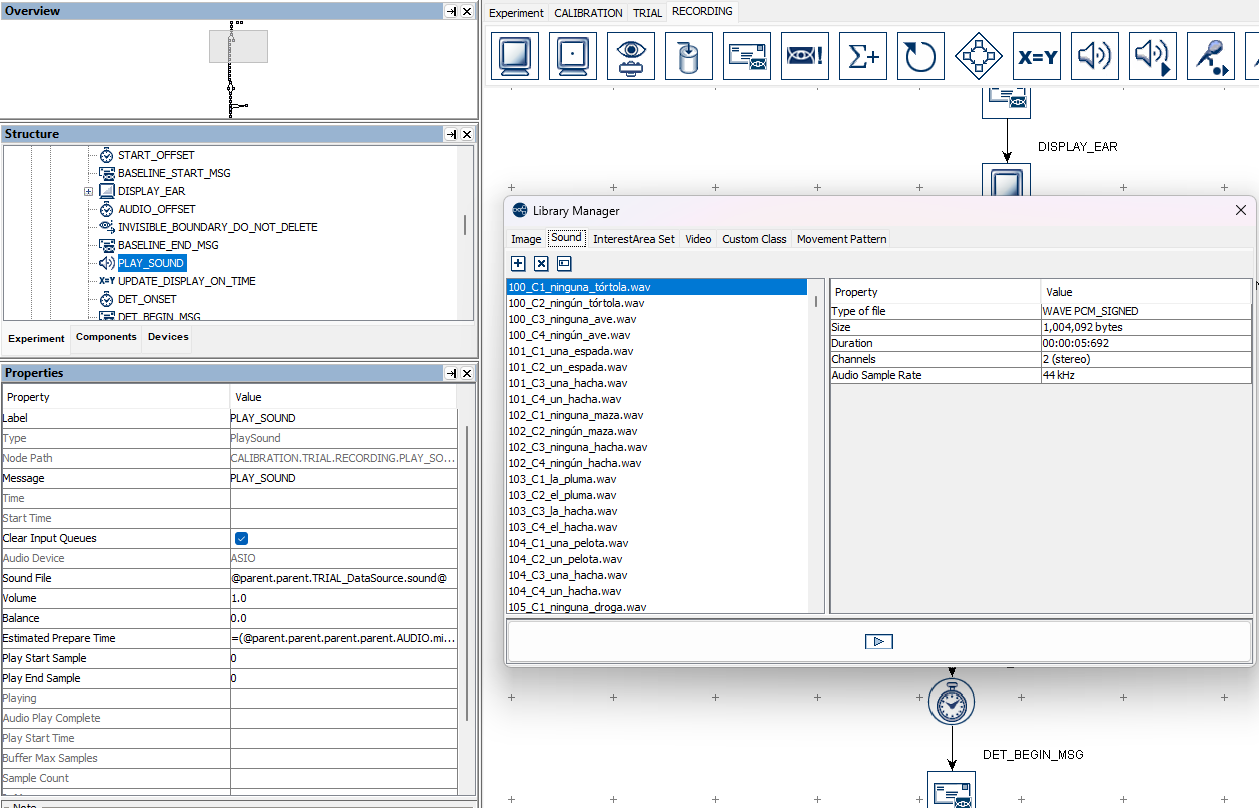

3. Audio Integration and Timing Control

A central component of the experiment involves integrating auditory stimuli with precise timing. Each sentence is presented as an audio file, and its playback must be tightly synchronized with eye-tracking recording.

Key implementation features include:

- Dynamic loading of audio files from the DataSource.

- Playback control within dedicated routines.

- Alignment between stimulus onset and recording events.

Figure 2. Audio Integration.

This part of the system is currently being tested and adjusted to minimize latency. Particular attention is being given to audio configuration (e.g., ASIO drivers) to ensure accurate temporal alignment.
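When checking temporal alignment, it can help to know each audio file's nominal duration in advance, for example to fill a duration column in the DataSource or to compare against the interval between logged onset and offset events. The sketch below reads the duration from the WAV header using only the Python standard library. It assumes the stimuli are uncompressed WAV files; `write_silence` exists only to create a test file for the demonstration.

```python
import os
import tempfile
import wave


def wav_duration_ms(path):
    """Return the nominal duration of a WAV file in milliseconds."""
    with wave.open(path, "rb") as wav:
        return 1000.0 * wav.getnframes() / wav.getframerate()


def write_silence(path, seconds, rate=44100):
    """Write a mono 16-bit silent WAV file, used here only as a test fixture."""
    with wave.open(path, "wb") as wav:
        wav.setnchannels(1)
        wav.setsampwidth(2)
        wav.setframerate(rate)
        wav.writeframes(b"\x00\x00" * int(seconds * rate))


# Usage: create a 1.5 s silent file in a temporary folder and measure it.
with tempfile.TemporaryDirectory() as tmp:
    demo_path = os.path.join(tmp, "demo.wav")
    write_silence(demo_path, seconds=1.5)
    demo_duration = wav_duration_ms(demo_path)
print(f"{demo_duration:.1f} ms")
```

The header duration says nothing about output latency on the Display PC; it only provides the reference value against which measured playback timing can be compared.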

4. EyeLink Integration and Data Recording

The experiment is being integrated with the EyeLink 1000 Plus system to record pupil size and gaze data. This requires coordinating the experiment flow with EyeLink-specific commands and ensuring reliable communication between the Display PC and the Host PC.

The current setup includes:

- Calibration and validation procedures prior to trials.

- Trial-level control of recording start/stop.

- Logging of events aligned with stimulus presentation.
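In script form, trial-level recording control and event logging might look like the sketch below. It is written against any object that exposes `sendMessage`, `startRecording`, and `stopRecording`, which are the method names pylink's EyeLink object provides, and it is exercised here with a stand-in `FakeTracker` so that it runs without hardware. The message labels `AUDIO_ONSET` and `AUDIO_OFFSET` are hypothetical, and in Experiment Builder the same steps are normally configured through the experiment graph rather than written by hand.

```python
class FakeTracker:
    """Stand-in that records calls, so the flow can be dry-run without hardware."""

    def __init__(self):
        self.log = []

    def sendMessage(self, text):
        self.log.append(("msg", text))
        return 0

    def startRecording(self, file_samples, file_events,
                       link_samples, link_events):
        self.log.append(("start", (file_samples, file_events,
                                   link_samples, link_events)))
        return 0  # 0 signals success

    def stopRecording(self):
        self.log.append(("stop", None))


def run_trial(tracker, trial_num, present_stimulus):
    """Record one trial and bracket the stimulus with timestamped messages."""
    tracker.sendMessage(f"TRIALID {trial_num}")
    # Save samples and events to the EDF file and make them available over the link.
    error = tracker.startRecording(1, 1, 1, 1)
    if error:
        raise RuntimeError(f"startRecording failed with code {error}")
    try:
        # Hypothetical labels. The message marks when playback was requested,
        # not when sound actually left the speakers.
        tracker.sendMessage("AUDIO_ONSET")
        present_stimulus()
        tracker.sendMessage("AUDIO_OFFSET")
    finally:
        # Always stop recording, even if stimulus presentation raises.
        tracker.stopRecording()
    tracker.sendMessage("TRIAL_RESULT 0")


# Usage: dry run with the stand-in tracker and a no-op stimulus.
fake = FakeTracker()
run_trial(fake, trial_num=1, present_stimulus=lambda: None)
for entry in fake.log:
    print(entry)
```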

Data integration snippet: This snippet defines a custom Experiment Builder class that interfaces with the EyeLink system via pylink. It tracks trial-level state, retrieves the newest eye-tracking sample at a fixed interval, extracts the pupil size, and sends it to the Host PC as a status message. Limiting how often the message is updated keeps the Host PC display readable while still allowing pupil size to be monitored during stimulus presentation.

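```python
import sreb
import sreb.time
import pylink


class CustomClassTemplate(sreb.EBObject):
    def __init__(self):
        sreb.EBObject.__init__(self)
        self.updateDuration = 500  # minimum interval between status updates
        self.checkingStatus = 0    # set to 1 elsewhere to enable updates
        # Initialize trial state so the object is usable before resetTrial().
        self.startTime = 0
        self.lastUpdateTime = 0
        self.updatePupil = 0
        self.pupilSize = 0
        self.trialNum = 0

    def resetTrial(self, trialNum):
        """Clear the per-trial state at the start of each trial."""
        self.startTime = 0
        self.updatePupil = 0
        self.checkingStatus = 0
        self.trialNum = trialNum

    def sendPupilTohost(self):
        """Send the current pupil size to the Host PC status line."""
        if self.checkingStatus != 1:
            return

        now = sreb.time.getCurrentTime()
        if self.startTime == 0:
            # First call in this trial: update immediately.
            self.startTime = now
            self.lastUpdateTime = now
            self.updatePupil = 1
        elif now - self.lastUpdateTime >= self.updateDuration:
            # Enough time has passed since the last update.
            self.lastUpdateTime = now
            self.updatePupil = 1

        if self.updatePupil == 1:
            tracker = pylink.getEYELINK()
            s = tracker.getNewestSample()
            if s:
                # Prefer the right eye; fall back to the left eye.
                eyeData = s.getRightEye()
                if eyeData is None:
                    eyeData = s.getLeftEye()
                # Skip the update if neither eye has data in this sample.
                if eyeData is not None:
                    self.pupilSize = int(eyeData.getPupilSize())
                    tracker.sendCommand(
                        "record_status_message 'Trial = " + str(self.trialNum) +
                        " Pupil Size = " + str(self.pupilSize) + "'"
                    )
            self.updatePupil = 0
```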

The system generates .edf files containing raw physiological data, which will later be synchronized with behavioral data for analysis.
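Before pupil data can be compared across trials, a common preprocessing step is to express each trial's pupil size relative to a pre-stimulus baseline. The sketch below shows subtractive baseline correction, assuming the samples have already been exported from the .edf file into `(time_ms, pupil)` pairs and that the stimulus onset time is known from a logged message. The 200 ms baseline window is an arbitrary choice for this example, not a value taken from the project.

```python
from statistics import mean


def baseline_correct(samples, onset_ms, baseline_ms=200):
    """Subtract the mean pre-onset pupil size from every post-onset sample.

    samples: list of (time_ms, pupil) pairs for one trial.
    Returns (time relative to onset, baseline-corrected pupil) pairs.
    """
    baseline = [pupil for t, pupil in samples
                if onset_ms - baseline_ms <= t < onset_ms]
    if not baseline:
        raise ValueError("No samples fall inside the baseline window.")
    baseline_mean = mean(baseline)
    return [(t - onset_ms, pupil - baseline_mean)
            for t, pupil in samples if t >= onset_ms]


# Usage with made-up numbers (not real data): onset at 200 ms.
toy_samples = [(0, 100), (100, 102), (200, 110), (300, 120)]
print(baseline_correct(toy_samples, onset_ms=200))
```

Divisive correction (dividing by the baseline instead of subtracting it) is another option; which one is used affects how effects should be interpreted.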

5. Response Collection and Behavioral Data

Following each stimulus, participants provide an acceptability judgment using predefined keys. This component is currently implemented to capture:

- Key response.

- Reaction time.

- Trial condition.

These behavioral measures are designed to complement the pupil data, enabling comparisons between explicit responses and implicit processing effort.

Outcomes / Data Output

The experiment is designed to produce two complementary datasets:

- Behavioral data (.dat) from Experiment Builder.

- Eye-tracking data (.edf) from EyeLink.

The .dat file contains trial-level variables such as condition, response, and timing, while the .edf file contains high-resolution physiological data. These datasets will be combined in later stages of the project.
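The combination step could be as simple as joining the two sources on a shared trial identifier. The sketch below assumes the behavioral rows have been read into dictionaries and that each trial's pupil data has already been reduced to a single summary value; the keys `trial`, `condition`, and `pupil_change` are hypothetical, and the numbers in the usage example are placeholders rather than results.

```python
from collections import defaultdict
from statistics import mean


def merge_by_trial(behavioral_rows, pupil_by_trial):
    """Attach a pupil summary to each behavioral row, matching on trial number.

    Trials with no pupil data get None so that gaps stay visible.
    """
    merged = []
    for row in behavioral_rows:
        combined = dict(row)
        combined["pupil_change"] = pupil_by_trial.get(row["trial"])
        merged.append(combined)
    return merged


def mean_by_condition(merged_rows):
    """Average the pupil summary within each condition, skipping missing trials."""
    grouped = defaultdict(list)
    for row in merged_rows:
        if row["pupil_change"] is not None:
            grouped[row["condition"]].append(row["pupil_change"])
    return {cond: mean(values) for cond, values in grouped.items()}


# Usage with placeholder values (not real data).
behavioral = [
    {"trial": 1, "condition": "grammatical", "response": "yes"},
    {"trial": 2, "condition": "ungrammatical", "response": "no"},
    {"trial": 3, "condition": "grammatical", "response": "yes"},
]
pupil = {1: 10.0, 2: 30.0}  # trial 3 has no pupil summary
merged = merge_by_trial(behavioral, pupil)
print(mean_by_condition(merged))
```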

Why This Implementation Matters

This component of the project demonstrates the ongoing process of translating a theoretical experimental design into a working eye-tracking experiment. The current implementation highlights the complexity of coordinating multiple systems while maintaining precise timing and experimental control.

From an HLT perspective, this work links:

- Linguistic theory to experimental variables.

- Structured datasets to experimental control.

- Hardware systems to real-time data collection.

As the project continues, further refinements will focus on improving stability, optimizing timing, and preparing the experiment for full-scale data collection and analysis.

For more detailed information about the project, please check the following repository.